A Priority Scorecard for Investigating ED/ESD Programs

Based on the article: “Is ESD a Scam? Over-Mentored, Under-Funded” by Tiisetso Maloma (https://www.tiisetsomaloma.co.za/2025/07/25/is-esd-a-scam/) – refer to the full article for detailed notes and examples.

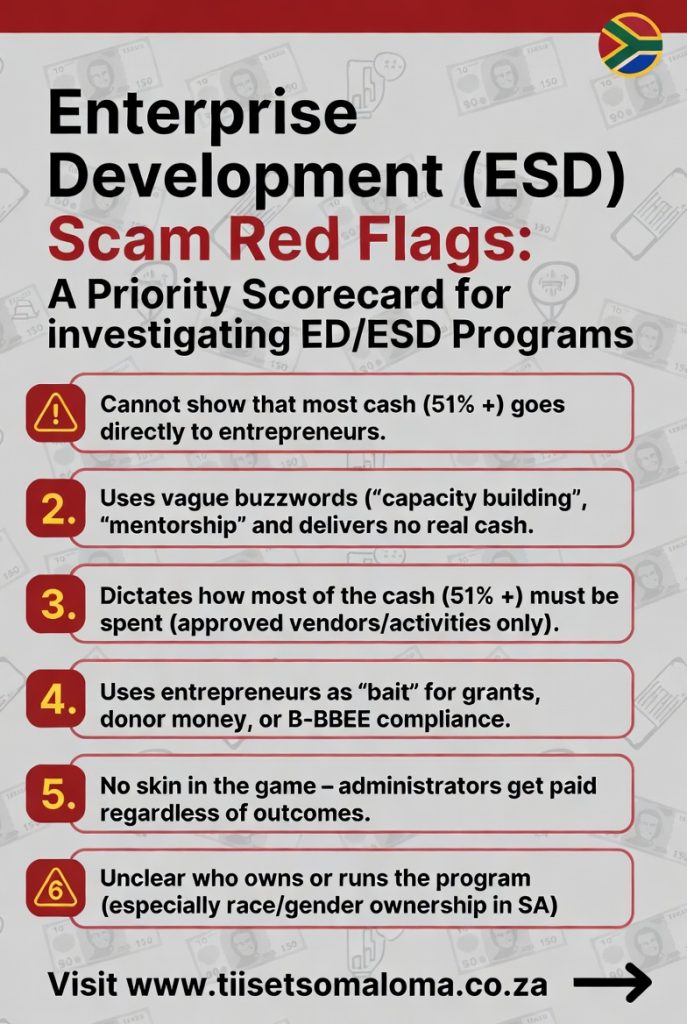

Priority 1: The Ultimate Test – Where the Money Actually Goes

1. Cannot demonstrate that 51–80%+ of cash or cash-equivalents goes directly to entrepreneurs.

- The program lacks audited, transparent numbers proving that the majority of funds reach founders as real cash, or its numbers show that most of the money stays with the program, consultants, corporate staff, or overhead.

- Why it matters: This is the definitive test of genuine support vs. extraction.

2. Heavy use of vague, extractive language AND the claimed “support” is not delivered as cash.

- Language red flag: Websites and reports are filled with buzzwords like “capacity building”, “ecosystem development”, “mentorship & training”, “awareness raising”, “multi-stakeholder collaboration”, “pre-investment readiness” – sounding positive but delivering mostly workshops and advice instead of real capital.

- Cash red flag: The program reports delivering value to entrepreneurs but provides it as in-kind support, vouchers, training packages, or paid consultants – not actual spendable money in the founder’s hands.

- Why it matters: This is the classic “over-mentored, under-funded” pattern. The vague language creates the illusion of value while the non-cash delivery means entrepreneurs receive little to no real capital they can deploy according to market needs.

3. The program dictates how 51%+ of the cash and equivalents must be spent by the entrepreneur.

- They openly cap direct entrepreneur funding at barely over half, and then rigidly dictate exactly how that portion must be used (e.g., only for specific approved expenses, vendors, or activities), removing real decision-making power from the entrepreneur. Often framed as “reasonable”, “structured”, or “best practice”.

- Why it matters: Legitimate programs aim to maximize flexible, direct capital to entrepreneurs – not openly cap it low and then control the spending. The entrepreneur, not the program, knows best what their business needs.
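To make the arithmetic behind flag 1 concrete, here is a minimal Python sketch that computes the share of a program's total spend reaching entrepreneurs as spendable cash. The spending categories, the `ProgramSpend` and `fails_flag_1` names, and the example figures are hypothetical, invented for illustration; a real check would draw on audited financial statements. In line with flag 2, in-kind support (vouchers, training packages, paid consultants) is deliberately not counted as cash.

```python
from dataclasses import dataclass

# Lower bound from flag 1: at least 51% of spend should reach founders as cash.
DIRECT_CASH_THRESHOLD = 0.51


@dataclass
class ProgramSpend:
    """Hypothetical budget breakdown for one program year (any currency)."""
    cash_to_entrepreneurs: float   # grants, loans, equity paid into founders' accounts
    in_kind_support: float         # vouchers, training packages, paid consultants
    staff_and_consultants: float   # salaries and fees of program staff
    overhead: float                # admin, venues, reporting, marketing

    def total(self) -> float:
        return (self.cash_to_entrepreneurs + self.in_kind_support
                + self.staff_and_consultants + self.overhead)

    def direct_cash_share(self) -> float:
        """Fraction of total spend that reaches entrepreneurs as spendable cash."""
        total = self.total()
        if total <= 0:
            raise ValueError("total spend must be positive")
        return self.cash_to_entrepreneurs / total


def fails_flag_1(spend: ProgramSpend) -> bool:
    """True if less than 51% of total spend reaches entrepreneurs as cash."""
    return spend.direct_cash_share() < DIRECT_CASH_THRESHOLD


example = ProgramSpend(
    cash_to_entrepreneurs=2_000_000,
    in_kind_support=3_000_000,
    staff_and_consultants=4_000_000,
    overhead=1_000_000,
)
print(f"{example.direct_cash_share():.0%}")  # 20%
print(fails_flag_1(example))                 # True
```

Flag 1 is also triggered when a program simply cannot produce audited figures to fill in a breakdown like this one.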

Priority 2: Structural & Behavioral Indicators (The “Industrial Complex” Pattern)

These strongly reinforce the Priority 1 flags and reveal the system that protects extraction.

4. Entrepreneurs are used primarily as “bait” for grants, donor money, corporate sponsorships, or B-BBEE compliance points.

- Mass recruitment of founders (especially black-owned, youth, women, township/rural) to attract funding/PR, but they receive mostly “crumbs” – advice, certificates, small events.

- Why it matters: Entrepreneurs become line items in funding reports, not genuine beneficiaries.

5. No skin in the game for program administrators.

- Consultants, trainers, and corporate staff operate on secure, fully funded salaries regardless of outcomes. The entrepreneur bears all the risk of business failure.

- Why it matters: As Nassim Taleb argues, in a concept the article draws on, bureaucracy separates people from the consequences of their actions.

Priority 3: Transparency & Accountability Failures

These make it difficult or impossible to verify genuine impact.

6. Unclear or opaque ownership/structure of the ESD-providing organisation (especially regarding gender/race in SA B-BBEE context).

- No clear disclosure of who owns, founded, or runs the program and their genuine transformation credentials.

- Why it matters: Lack of transparency often hides that the intermediary benefits insiders more than intended entrepreneurs.

7. Vague or misleading “impact” metrics.

- Focus on “number trained”, “workshops held”, “participants engaged”, or “awareness raised” instead of real outcomes: revenue growth, jobs created, business survival rates after 2–3 years, or actual capital deployed.

- Why it matters: These metrics measure activity, not results.

8. Lack of verifiable, independent long-term success stories.

- Few or no genuinely independent, verifiable successes. Common entrepreneur feedback includes “waste of time”, “theory only”, or “no real money”.

- Why it matters: Real programs produce real, traceable success stories.

Priority 4: Operational & Targeting Red Flags (Lower confidence alone, but confirmatory)

9. Little to no direct, scalable funding for real businesses.

- Emphasis on non-financial “support” while organisers secure large multi-year contracts. Entrepreneurs may even pay fees or cover their own costs.

10. Donor/government dependence with short cycles, bureaucracy, and repeated similar programs.

- Heavy reliance on fluctuating external funding that prioritises reporting and compliance over results. Endless rebranded versions of the same workshops.

11. Over-promising macro outcomes through top-down “ecosystems” or “frameworks”.

- Claims that workshops and “multi-stakeholder collaboration” will solve big problems (transformation, inclusion) while ignoring bottom-up realities like access to real capital.

12. Targeting inexperienced or vulnerable entrepreneurs with hype.

- Aggressive marketing to beginners or vulnerable groups without requiring proven traction, while experienced founders quietly avoid the program.

How to Use This Scorecard (Priority Order)

Step 1 – Apply Priority 1 flags first (must-pass test):

- If any of flags 1–3 are present → Strong scam/Industrial Complex signal. Treat with extreme skepticism or avoid.

Step 2 – Score remaining flags (4–12) to assess severity:

- 0–3 additional flags (beyond Priority 1) → High caution. Likely extractive or low-value. Verify independently with hard numbers.

- 4+ additional flags → Very strong ESD scam / Industrial Complex signal. Avoid.
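The two-step procedure above can be expressed as a small decision function. This is a minimal sketch, assuming flags are recorded by their numbers (1–12) in this scorecard; the `assess` name, the `Assessment` type, and the verdict strings are hypothetical. How the two steps combine is also an assumption: four or more additional flags take precedence, then any Priority 1 flag, and otherwise the sketch reports "high caution", because the scorecard as written assigns that band to any count of 0–3 additional flags.

```python
from dataclasses import dataclass

# Flag numbers as used in this scorecard (hypothetical encoding).
PRIORITY_1 = frozenset({1, 2, 3})
ALL_FLAGS = frozenset(range(1, 13))


@dataclass(frozen=True)
class Assessment:
    priority_1_flags: frozenset[int]  # Step 1: which must-pass flags were found
    additional_count: int             # Step 2: how many of flags 4-12 were found
    verdict: str


def assess(flags: set[int]) -> Assessment:
    """Apply Step 1 and Step 2 of the scorecard to a set of flag numbers."""
    unknown = set(flags) - ALL_FLAGS
    if unknown:
        raise ValueError(f"unknown flag numbers: {sorted(unknown)}")

    p1 = frozenset(flags) & PRIORITY_1
    additional = len(set(flags) - PRIORITY_1)

    if additional >= 4:
        verdict = "very strong ESD scam / Industrial Complex signal: avoid"
    elif p1:
        verdict = "strong signal: treat with extreme skepticism or avoid"
    else:
        # The scorecard places 0-3 additional flags in a "high caution" band.
        verdict = "high caution: verify independently with hard numbers"
    return Assessment(p1, additional, verdict)


print(assess({1, 4, 7}).verdict)
# strong signal: treat with extreme skepticism or avoid
print(assess({2, 4, 5, 8, 10}).verdict)
# very strong ESD scam / Industrial Complex signal: avoid
```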

South Africa context:

- Cross-check against B-BBEE Commission reports, which have repeatedly highlighted low ESD spending effectiveness, under-funding of SMMEs, and compliance-focused spending that fails to deliver real transformation to black-owned businesses.

- For the full analysis, examples (including the “Farmer and the Middlemen” story), and first-principles solutions, read the original article: [Is ESD a Scam?](https://www.tiisetsomaloma.co.za/2025/07/25/is-esd-a-scam/)